LSTM Inefficiency in Long-Term Dependencies Regression Problems

DOI:

https://doi.org/10.37934/araset.30.3.1631

Keywords:

Recurrent Neural Networks, regression problems, Vanishing Gradient Problem, Long Short-Term Memory, long-term dependencies

Abstract

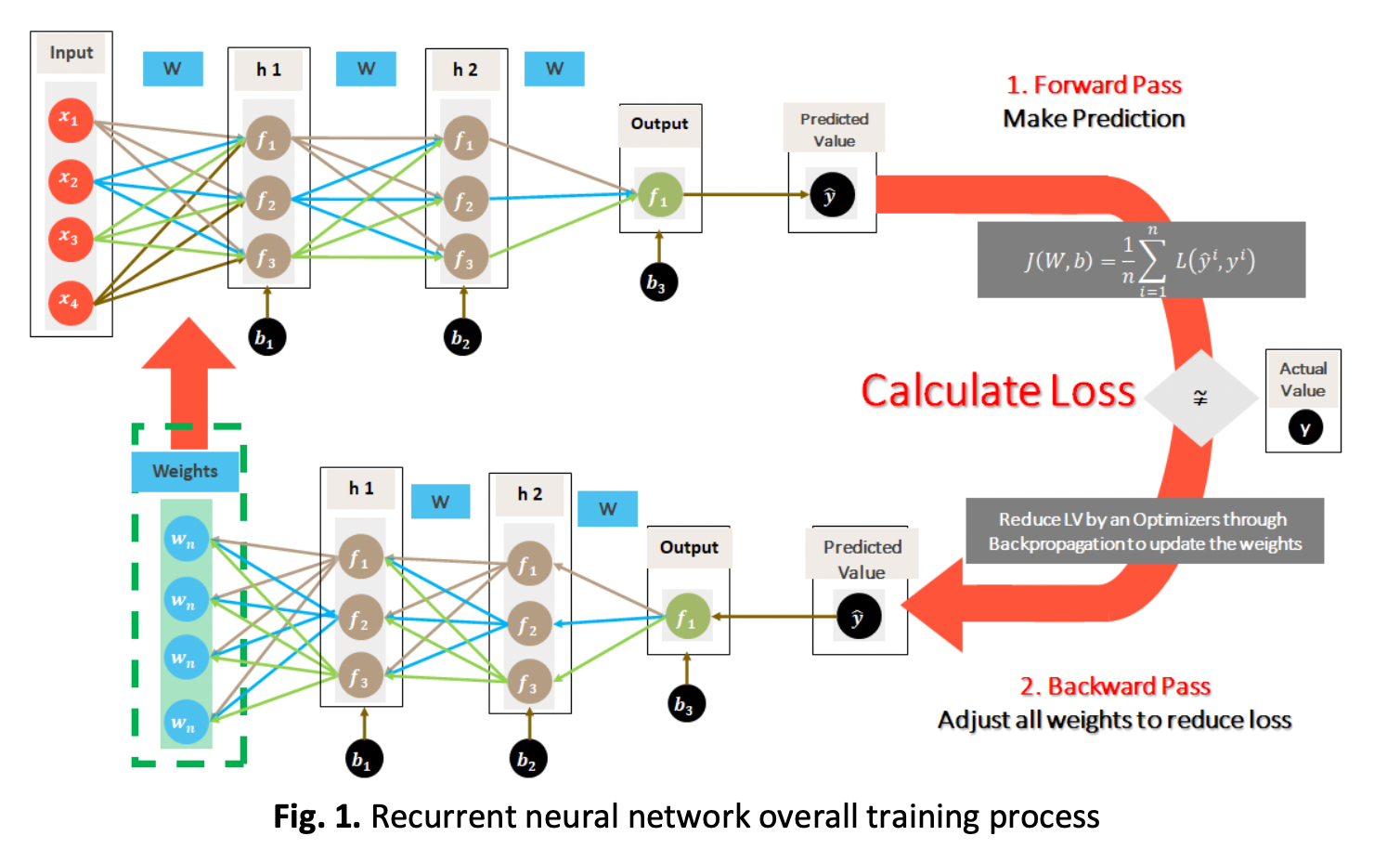

Recurrent neural networks (RNNs) are well suited to regression problems on sequential data because their recurrent internal structure can carry information across many time steps. However, RNNs are prone to the vanishing gradient problem (VGP), which causes the network to stop learning and yields poor prediction accuracy, especially where long-term dependencies are involved. Gated units such as long short-term memory (LSTM) and the gated recurrent unit (GRU) were originally created to address this problem. However, VGP remains unsolved, even in gated units. The problem occurs during backpropagation, when the gradients used to update the recurrent network weights shrink towards zero and prevent the network from learning the correlation between temporally distant events (long-term dependencies), which results in slow or no convergence. This study provides an empirical analysis of LSTM networks, with an emphasis on their inefficient convergence on long-term dependencies caused by VGP. Case studies on NASA’s turbofan engine degradation are examined and empirically analysed.
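The mechanism described in the abstract can be illustrated with a minimal sketch. It is not the study's experimental setup: it assumes a hypothetical one-unit tanh RNN, h_t = tanh(w * h_{t-1} + x_t), with an arbitrary recurrent weight `w = 0.9` and a constant input, and it tracks how strongly the state at step t still depends on the initial state. The function name `gradient_through_time` and all values are illustrative assumptions.

```python
import math


def gradient_through_time(w, inputs, h0=0.0):
    """Return |d h_t / d h_0| for every step t of a scalar tanh RNN.

    Toy model (an assumption for illustration, not the paper's network):
        h_t = tanh(w * h_{t-1} + x_t)
    """
    h = h0
    grad = 1.0  # d h_0 / d h_0
    magnitudes = []
    for x in inputs:
        h = math.tanh(w * h + x)
        # Chain rule: d h_t / d h_{t-1} = w * (1 - h_t^2).
        # Each factor has magnitude at most |w|, so with |w| < 1
        # the product shrinks at least geometrically.
        grad *= w * (1.0 - h * h)
        magnitudes.append(abs(grad))
    return magnitudes


# Hypothetical settings: 50 time steps with a constant input of 0.5.
inputs = [0.5] * 50
mags = gradient_through_time(w=0.9, inputs=inputs)

for step in (1, 10, 50):
    print(f"step {step:2d}: |dh_t/dh_0| = {mags[step - 1]:.3e}")
```

Because every factor in the product is smaller than one in magnitude, the sensitivity to the initial state decays with sequence length, which is the effect that hinders learning of temporally distant correlations. Gated units change the form of these factors, so this sketch shows only the basic mechanism and not the LSTM behaviour the study analyses.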
